Recursive Language Models are a new strategy for dealing with long context problems. We've implemented them in DSPy so you can quickly and easily try them with your existing DSPy programs or with new tasks.

Many of us are familiar with the perils of context rot. As our contexts grow, LLM performance drops significantly for many types of tasks. For agentic and exploration tasks this is particularly problematic, as our context grows the longer the agent works.

Recursive Language Models, a new strategy developed by Alex Zhang and Omar Khattab, address the context rot problem by providing LLMs with a separate environment to store information (in this case, a Python instance), from which the LLM can dynamically load context into the token space as needed. This environment is persisted and shared among subagents, allowing the LLM to ask questions about and explore the information without loading it into its main context.

This simple harness - a shared environment where LLMs can recursively interact with input context as variables - proves to be incredibly effective when dealing with very large inputs. We've used RLMs to summarize hundreds of megabytes of logs, perform coding tasks across massive multi-project codebases, and source evidence across a large collection of books.

We have implemented the RLM pattern in DSPy, allowing you to quickly and easily try RLMs with your existing DSPy programs or with new tasks. Today we're going to walk through how RLMs work, to establish a mental model for when and how you might want to apply them, then get you up and running with an example in DSPy.

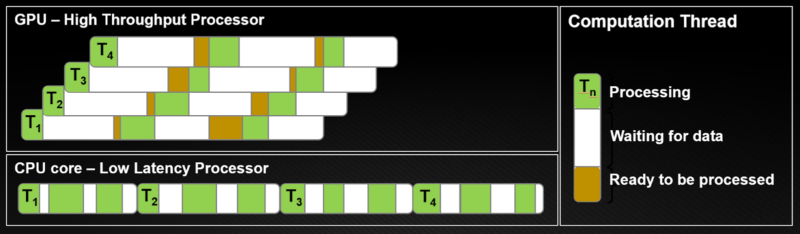

RLMs Manage Two Buckets of Context

RLMs work by providing an LLM with a REPL-like interface (think: a Jupyter Notebook), where it can explore, analyze, and load information by writing Python code. This gives us two buckets of context: the variable space (the information stored in the REPL) and the token space (the context extracted from the variable space).

In a normal coding agent, you might provide the following context:

Your inputs are the following: Context: {LONG_context}, Other Inputs: {LONG_other_inputs}

If your inputs are sufficiently long, you could already be triggering context rot. Or, if your context is really long, your inputs might not even fit in the model's context window.

With an RLM, on the other hand, the following context is provided:

Your inputs are the following: Context, Other Inputs.

You can access them inside your repl as variables. The variables are `context` and `other_inputs` respectively.

Previews:

context: {context[:100]}

other_inputs: {other_inputs[:100]}
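To make the difference concrete, here is a minimal sketch of how a prompt like this could be assembled. The function name `build_rlm_prompt` and the 100-character preview length are assumptions made for this example; this is not the prompt DSPy actually generates.

```python
def build_rlm_prompt(preview_chars: int = 100, **inputs: str) -> str:
    """Describe each input by name and a short preview instead of inlining it."""
    names = ", ".join(inputs)
    variables = ", ".join(f"`{name}`" for name in inputs)
    lines = [
        f"Your inputs are the following: {names}.",
        f"You can access them inside your REPL as variables: {variables}.",
        "Previews:",
    ]
    for name, value in inputs.items():
        # Only the first few characters of each input reach the token space.
        lines.append(f"{name}: {value[:preview_chars]}")
    return "\n".join(lines)


# Hypothetical inputs: one very large, one small.
prompt = build_rlm_prompt(
    context="log line\n" * 1_000_000,
    other_inputs="Which service logged the most errors?",
)
print(len(prompt))  # a few hundred characters, however large the inputs are
```

The prompt size depends on the number of inputs and the preview length, not on how much data sits behind each variable.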

Then we would prompt the LLM to write code in whatever language you have implemented the REPL in, which for both Alex's and DSPy's implementations is Python.

Then you run the code, append the output to history, and repeat.
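The loop itself can be sketched in a few lines. Everything below is illustrative: `scripted_llm` is a stand-in that replays canned code instead of calling a model, `run_rlm_loop` is a hypothetical name, and plain `exec` is used where a real implementation would use an isolated sandbox.

```python
import contextlib
import io


def scripted_llm(history: list[str]) -> str:
    """Stand-in for a real model: returns the next code snippet to run."""
    steps = [
        "print(len(context))",
        "hits = [line for line in context.splitlines() if 'ERROR' in line]\nprint(len(hits))",
        "answer = hits[0]\nprint('DONE')",
    ]
    # Each turn appends two history entries (code + output) after the first.
    return steps[(len(history) - 1) // 2]


def run_rlm_loop(inputs: dict, llm, max_iterations: int = 10) -> dict:
    variables = dict(inputs)  # the variable space: persists across turns
    history = [f"Variables available: {', '.join(inputs)}"]  # the token space
    for _ in range(max_iterations):
        code = llm(history)
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, variables)  # NOT sandboxed; for illustration only
        output = buffer.getvalue()
        history += [code, output]  # only code and printed output are tokenized
        if "DONE" in output:
            break
    return {"history": history, "variables": variables}


logs = "INFO ok\n" * 50_000 + "ERROR disk full\n" + "INFO ok\n" * 50_000
state = run_rlm_loop({"context": logs}, scripted_llm)
print(state["variables"]["answer"])  # ERROR disk full
```

Note that the 800 KB of logs never enters `history`. The model only ever sees the code it wrote and whatever that code printed.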

Recursively Prompting LLMs in the REPL

The "Recursion" in "RLM" describes the LLM's ability to prompt itself, which we allow it to do in the REPL. This ability is exposed as a function.

In the case of dspy.RLM, we implement a single sub_llm() call. The main LLM can prepare a prompt and task a sub LLM with working on some information in the variable space. The results are returned in the variable space, as with any other function in a REPL, and the LLM can choose whether or not to tokenize them.

Part of the beauty of this is that how the LLM splits up the work is undefined. Given a list of 10 long documents, the LLM could choose to split the work into 10 subcalls, or combine the work and parse the outputs, chunk sequentially, etc.
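Here is a rough sketch of two ways the main LLM might divide that work from inside the REPL. The `sub_llm` below is a stub written for this example (it records its prompts and returns a fake summary); it only mimics the shape of the call described above, not DSPy's implementation.

```python
calls = []  # records each sub-call prompt so we can count them


def sub_llm(prompt: str) -> str:
    """Stub for a real sub-LLM call (hypothetical); returns a short fake summary."""
    calls.append(prompt)
    return f"summary {len(calls)} ({len(prompt)} chars read)"


documents = [f"Document {i}: " + "lorem ipsum " * 5_000 for i in range(10)]

# Strategy 1: one sub-call per document.
per_document = [sub_llm("Summarize:\n" + doc) for doc in documents]

# Strategy 2: two documents per sub-call, halving the number of calls.
pairs = [documents[i:i + 2] for i in range(0, len(documents), 2)]
per_pair = [sub_llm("Summarize:\n" + "\n\n".join(pair)) for pair in pairs]

# The summaries live in variables. Only what the main LLM chooses to print
# would enter its token space.
print(len(per_document), len(per_pair), len(calls))  # 10 5 15
```

Neither strategy is prescribed. The model picks one (or something else entirely) based on what it has learned about the data so far.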

This kinda sounds like Claude Code, or the way most coding agents work. They fire off subagents to do work, then return the output to the main context. It's similar, but there's a crucial difference: Claude Code, out of the box, doesn't save outputs to a variable space that it can manipulate. For example, a Claude Code subagent returns a blob of text back into the context by default.

If Claude Code were to adopt a pattern where subagents write their results to files, we could consider this an RLM pattern.

And this turns out to be the difference maker. By providing the LLMs with a shared space to explore and store information outside the token space, RLMs unlock some incredible capabilities. Context rot is mitigated and tasks that can't fit into a single context window are suddenly addressable.

DSPy is the Easiest Way to Try RLMs

By extending DSPy with the RLM-based paradigm, we are able to increase RLMs' capabilities and enforce some structure on the RLM call.

For example, dspy.RLM gets to take advantage of the structure of the provided Signature. If your inputs include typed parameters or arbitrary data structures, that information is immediately provided to the RLM. When passing only strings, we find RLMs will spend the first few iterations just exploring the shape of the information. Signatures help us avoid this step.

Perhaps the best feature of dspy.RLM is that it works with all your existing Signatures. No need to tweak them, redesign your parameters, or issue special instructions. dspy.RLM is simply a new inference time strategy (just like Predict or ChainOfThought) that we can modularly swap in or out.

The only detail to note is that RLMs require LLMs with strong reasoning and coding capabilities. The RLM strategy leverages the coding skills of larger models to solve long context problems - that's the unlock. GPT-5 and Opus versions work great with RLMs, though we continue to be surprised at how effective Kimi K2 is as well, despite being cheap and fast.

An Example RLM with DSPy

Creating an RLM with DSPy is easy:

    import dspy

    signature = "logs, question -> answer"
    rlm = dspy.RLM(signature)

    # all_my_logs holds your log data, loaded elsewhere
    result = rlm(
        logs=all_my_logs,
        question="Did anyone ask my agent about ice cream this week?",
    )

The only line above that's specific to RLMs is dspy.RLM, which is the Module we use instead of Predict, ChainOfThought, or ReAct.

When you call a program using the RLM module, DSPy creates and manages a local, isolated Python sandbox using Deno.

You can install Deno with: `curl -fsSL https://deno.land/install.sh | sh`. See the Deno Installation Docs for more details.

Your inputs are loaded into this environment as variables and the LLM is given a prompt DSPy prepares.

In our example above, we're using a string signature, but dspy.RLM works perfectly well with class-based signatures:

    class CodebaseUnderstanding(dspy.Signature):
        """
        Find all of the files from the provided codebase that would be helpful for understanding the given feature.
        """

        code_tree: dict = dspy.InputField()
        feature: str = dspy.InputField()
        relevant_filepaths: list[str] = dspy.OutputField()

    codebase_subsetter = dspy.RLM(CodebaseUnderstanding)

What's important to note here is that all the input variables - in this case code_tree and feature - are treated the same way.

If you've read about RLM and/or tried Alex's library, you may be used to the pattern where an RLM is set up with one very long context resource (loaded into the REPL, of course) that is then used to answer a given query. It's helpful to realize that we don't need to follow this pattern - one big context and one question - with dspy.RLM. Every input can be large or small; it doesn't matter, since they're all loaded into the REPL.

And as usual, DSPy helpfully provides your typed outputs in the response object. No need to worry about data extraction:

    result = codebase_subsetter(
        code_tree=dspy_repo,
        feature="RLM",
    )

    rlm_relevant_files = result.relevant_filepaths

We can also pass in Python functions as tools the LLM can call within the REPL:

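    def web_search(search_term: str):
        """Search the web for the given term."""
        ...  # Web search stuff

    def github_search(search_term: str):
        """Search GitHub for the given term."""
        ...  # GitHub search stuff

    codebase_subsetter = dspy.RLM(
        CodebaseUnderstanding,
        tools=[web_search, github_search],
    )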

For harder problems, RLMs can run for quite a while. There are a few things we can do to keep a leash on the AI and keep our wallet intact.

First, we can adjust the budget we give the RLM. We have two levers here:

- max_iterations: This specifies how many turns (each composed of reasoning and a REPL call) our RLM is given to complete the task. By default this is set to 10, but for many tasks 5 works well. Check your logs (or pass in verbose=True) and try a few runs to get a feel.

- max_llm_calls: This parameter defines how many sub-LLM calls the main RLM can fire off from the REPL. This figure is separate from the parameter above because the RLM can fire off many LLM calls from the same REPL turn.

Let me give you an example of max_llm_calls in practice:

In one task, after a couple of iterations, the model had developed and tested a prompt that performed well when given a subset of the very large context. The main LLM did some quick math and realized the remaining 20 LLM calls it had budgeted were more than enough to process the entire large context in 20 separate chunks. So it did.
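The arithmetic behind that decision is simple enough to sketch. The `CallBudget` class, `split_into_chunks` helper, and stub `sub_llm` below are hypothetical names invented for this illustration; they show one way a call limit and a single chunked REPL turn could interact, not how dspy.RLM enforces max_llm_calls internally.

```python
import math


class CallBudget:
    """Counts sub-LLM calls and refuses to exceed a fixed limit."""

    def __init__(self, max_llm_calls: int):
        self.max_llm_calls = max_llm_calls
        self.used = 0

    @property
    def remaining(self) -> int:
        return self.max_llm_calls - self.used

    def spend(self) -> None:
        if self.remaining <= 0:
            raise RuntimeError("sub-LLM call budget exhausted")
        self.used += 1


def split_into_chunks(text: str, n_chunks: int) -> list[str]:
    """Split text into at most n_chunks pieces of roughly equal size."""
    size = max(1, math.ceil(len(text) / n_chunks))
    return [text[i:i + size] for i in range(0, len(text), size)]


budget = CallBudget(max_llm_calls=20)
context = "x" * 1_000_003  # hypothetical large input


def sub_llm(prompt: str) -> str:
    """Stub sub-LLM: charges the budget and reports the prompt length."""
    budget.spend()
    return str(len(prompt))


# One REPL turn: size the chunks so the whole context fits the remaining calls.
chunks = split_into_chunks(context, budget.remaining)
results = [sub_llm(chunk) for chunk in chunks]
print(len(chunks), budget.remaining)  # 20 0
```

All 20 sub-calls happen inside a single iteration, which is why the two limits are tracked separately.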

Second, we can rein in costs by specifying a different, typically cheaper, LLM as the sub_lm. For example:

    codebase_subsetter = dspy.RLM(
        CodebaseUnderstanding,
        tools=[web_search, github_search],
        max_iterations=5,
        max_llm_calls=20,
        sub_lm=gpt_5_mini,
    )

Just set up the LLM as you would any other DSPy LLM.

Optimize Your RLM

dspy.RLM can be optimized like any other DSPy program. Behind the scenes, it's handled similarly to dspy.ReAct: tool descriptions and signature instructions are compiled together into an instruction block that is then optimized with GEPA, MIPROv2, or any other DSPy optimizer.

The way dspy.RLM works with signatures and optimizers is consistent and modular. Existing programs run with RLMs just by switching out the module. This is the killer feature of DSPy: when there's a new optimizer or test-time strategy, your existing signatures should just work. Applied AI moves fast; the tasks you define shouldn't have to change.

Use Cases for RLMs

The main use case for an RLM is tasks that require reasoning across long contexts. Below are five problem shapes where RLMs shine - each involves some combination of long input, fuzzy structure, and multi-step reasoning that would be painful to decompose by hand.

Given a large set of documents, an RLM can search through them to find the documents that fit given criteria. Downstream applications include:

- Fuzzily filtering data or logs from a certain app or service

- Finding outlier reviews in a large dataset

- Scanning for incorrect traces from an LLM service

- Long context summarization/QA

An easy target use case for this is codebase QA. If you need to find all relevant files for a given feature, an RLM can do grep-style operations along with some things that are harder in bash, such as AST parsing.
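As a small illustration of the AST side of this, here is the kind of helper an RLM might write for itself in the REPL. The function name `files_defining` and the miniature `code_tree` (a dict of file path to file contents, with made-up paths) are assumptions for this sketch.

```python
import ast


def files_defining(code_tree: dict[str, str], needle: str) -> list[str]:
    """Return paths whose source defines a class or function with needle in its name."""
    matches = []
    for path, source in code_tree.items():
        try:
            tree = ast.parse(source)
        except SyntaxError:
            continue  # skip files that are not valid Python
        for node in ast.walk(tree):
            is_definition = isinstance(
                node, (ast.ClassDef, ast.FunctionDef, ast.AsyncFunctionDef)
            )
            if is_definition and needle.lower() in node.name.lower():
                matches.append(path)
                break  # one hit per file is enough
    return matches


# Hypothetical miniature code tree: file path -> file contents.
code_tree = {
    "predict/rlm.py": "class RLM:\n    def forward(self):\n        pass\n",
    "predict/react.py": "class ReAct:\n    pass\n",
    "notes.py": "# mentions RLM only in a comment\nx = 1\n",
    "broken.py": "def oops(:\n",
}
print(files_defining(code_tree, "RLM"))  # ['predict/rlm.py']
```

A plain text search for "RLM" would also flag notes.py, where the term appears only in a comment. Walking the syntax tree restricts the match to actual definitions.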

- Multi-hop reasoning

One of the primary benchmarks used by Alex is BrowseComp. BrowseComp is a multi-hop reasoning benchmark, requiring you to find a fact inside a corpus, then chain multiple facts together from across the corpus in order to answer the final question.

Most complex QA tasks involve some kind of multi-hop reasoning, and we are encouraged by the improvements that RLMs offer in this area.

- Clustering and categorization

Given a long list of items, an RLM can investigate those items and come up with clusters based on what it sees. We see this as being especially useful in analyzing data from users - it could be reviews, traces, conversation intent, etc.

- Dynamic symbolic manipulation of long fuzzy contexts

It may be the case that you need to do some emergent decomposition based on fuzzy properties of the data. Let's say that in each document, you know that the date is referenced somewhere but you don't know where. It is very feasible to have an RLM investigate all the possible cases, and come up with a number of formats to extract, or even to use a sub_llm to extract the date from the file.
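A minimal sketch of that date example follows. The three patterns, the US-style month/day ordering, and the `sub_llm_extract_date` placeholder are all assumptions made for illustration; in practice the RLM would derive the formats from the documents it actually inspects.

```python
import re
from datetime import date, datetime
from typing import Optional

# Candidate formats the main LLM might settle on after sampling documents (assumed).
PATTERNS = [
    (re.compile(r"\b\d{4}-\d{2}-\d{2}\b"), "%Y-%m-%d"),
    (re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"), "%m/%d/%Y"),  # assumes month first
    (re.compile(r"\b[A-Z][a-z]+ \d{1,2}, \d{4}\b"), "%B %d, %Y"),
]


def sub_llm_extract_date(text: str) -> Optional[date]:
    """Placeholder for a sub-LLM call; this stub simply gives up."""
    return None


def extract_date(text: str) -> Optional[date]:
    for pattern, fmt in PATTERNS:
        match = pattern.search(text)
        if match:
            try:
                return datetime.strptime(match.group(), fmt).date()
            except ValueError:
                continue  # looked like a date but was not valid in this format
    # Nothing matched cheaply: fall back to the more expensive sub-LLM.
    return sub_llm_extract_date(text)


docs = [
    "Report filed 2024-03-15 by ops.",
    "Meeting held on March 3, 2023 in Berlin.",
    "Invoice 7/4/2022: overdue.",
    "Sometime last spring, nobody wrote it down.",
]
dates = [extract_date(doc) for doc in docs]
print(dates)
```

The first three documents are handled by cheap pattern matching. Only the last one, which has no recognizable format, would be handed to a sub-LLM.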